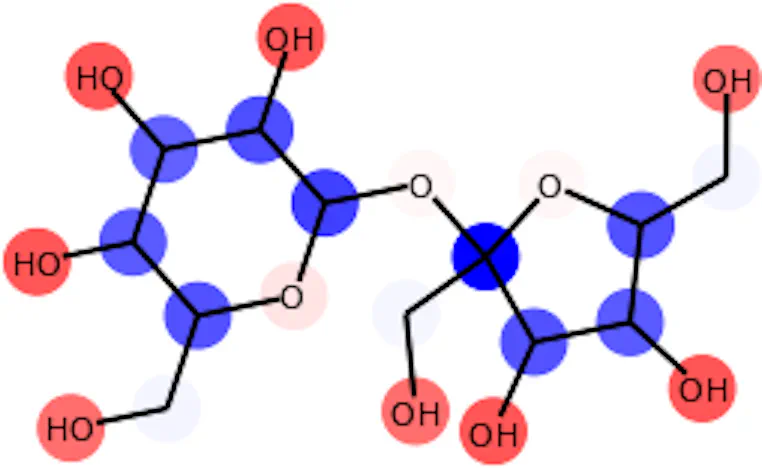

Positive and negative attributions for the solubility of sucrose

Abstract

Graph Networks are used to make decisions in potentially complex scenarios, but it is usually not obvious how or why they make them. In this work, we study the explainability of Graph Network decisions using two main classes of techniques, gradient-based and decomposition-based, on a toy dataset and a chemistry task. Our study lays the groundwork for future development as well as application to real-world problems.

Publication

International Conference on Machine Learning 2019 - Spotlight talk at the “Learning and Reasoning with Graph-Structured Representations” workshop